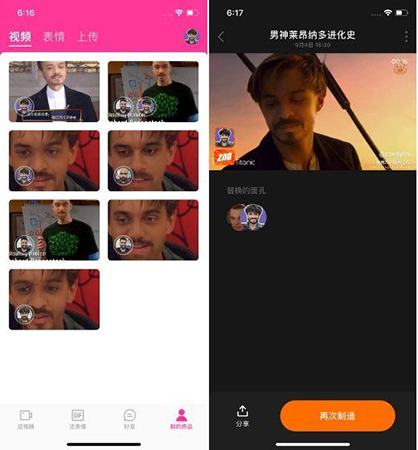

The AI firm Deeptrace found 15,000 deepfake videos online in September 2019, a near doubling over nine months. A staggering 96% were pornographic and 99% of those mapped faces from female celebrities on to porn stars. As new techniques allow unskilled people to make deepfakes with a handful of photos, fake videos are likely to spread beyond the celebrity world to fuel revenge porn. As Danielle Citron, a professor of law at Boston University, puts it: “Deepfake technology is being weaponised against women.” Beyond the porn there’s plenty of spoof, satire and mischief.

Deepfake technology can create convincing but entirely fictional photos from scratch. A non-existent Bloomberg journalist, “Maisy Kinsley”, who had a profile on LinkedIn and Twitter, was probably a deepfake. Another LinkedIn fake, “Katie Jones”, claimed to work at the Center for Strategic and International Studies, but is thought to be a deepfake created for a foreign spying operation.

Audio can be deepfaked too, to create “voice skins” or “voice clones” of public figures. In March 2019, the chief of a UK subsidiary of a German energy firm paid nearly £200,000 into a Hungarian bank account after being phoned by a fraudster who mimicked the German CEO’s voice. The company’s insurers believe the voice was a deepfake, but the evidence is unclear. Similar scams have reportedly used recorded WhatsApp voice messages.

A comparison of an original and deepfake video of Facebook chief executive Mark Zuckerberg. Photograph: The Washington Post via Getty Images

How are they made?

University researchers and special effects studios have long pushed the boundaries of what’s possible with video and image manipulation. But deepfakes themselves were born in 2017 when a Reddit user of the same name posted doctored porn clips on the site. The videos swapped the faces of celebrities – Gal Gadot, Taylor Swift, Scarlett Johansson and others – on to porn performers.

It takes a few steps to make a face-swap video. First, you run thousands of face shots of the two people through an AI algorithm called an encoder. The encoder finds and learns similarities between the two faces, and reduces them to their shared common features, compressing the images in the process. A second AI algorithm called a decoder is then taught to recover the faces from the compressed images. Because the faces are different, you train one decoder to recover the first person’s face, and another decoder to recover the second person’s face. To perform the face swap, you simply feed encoded images into the “wrong” decoder. For example, a compressed image of person A’s face is fed into the decoder trained on person B. The decoder then reconstructs the face of person B with the expressions and orientation of face A. For a convincing video, this has to be done on every frame.

Comparing original and deepfake videos of Russian president Vladimir Putin. Photograph: Alexandra Robinson/AFP via Getty Images

Another way to make deepfakes uses what’s called a generative adversarial network, or GAN. A GAN pits two artificial intelligence algorithms against each other. The first algorithm, known as the generator, is fed random noise and turns it into an image. This synthetic image is then added to a stream of real images – of celebrities, say – that are fed into the second algorithm, known as the discriminator. At first, the synthetic images will look nothing like faces. But repeat the process countless times, with feedback on performance, and the discriminator and generator both improve. Given enough cycles and feedback, the generator will start producing utterly realistic faces of completely nonexistent celebrities.

Who is making deepfakes?

Everyone from academic and industrial researchers to amateur enthusiasts, visual effects studios and porn producers. Governments might be dabbling in the technology, too, as part of their online strategies to discredit and disrupt extremist groups, or make contact with targeted individuals, for example.

It is hard to make a good deepfake on a standard computer. Most are created on high-end desktops with powerful graphics cards or, better still, with computing power in the cloud. This reduces the processing time from days and weeks to hours. But it takes expertise, too, not least to touch up completed videos to reduce flicker and other visual defects. That said, plenty of tools are now available to help people make deepfakes. Several companies will make them for you and do all the processing in the cloud. There’s even a mobile phone app, Zao, that lets users add their faces to a list of TV and movie characters on which the system has trained.

Chinese face-swapping app Zao has caused privacy concerns. Photograph: Imaginechina/SIPA USA/PA Images

How do you spot a deepfake?

It gets harder as the technology improves.
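
The face-swap routing described under “How are they made?” (one shared encoder, one decoder per person, then decoding with the “wrong” decoder on every frame) can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not a working deepfake system: each “face” is a hypothetical three-number list `[identity, expression, pose]`, and `encode`, `make_decoder` and `swap_a_to_b` are hand-written stand-ins for networks that would in practice be trained on thousands of images.

```python
from typing import Callable, List

Face = List[float]  # toy "image": [identity, expression, pose] (assumed layout)
Code = List[float]  # compressed form: [expression, pose]


def encode(face: Face) -> Code:
    """Shared encoder: keep only the features both people have in common."""
    _identity, expression, pose = face
    return [expression, pose]


def make_decoder(identity: float) -> Callable[[Code], Face]:
    """Build a decoder that always redraws one specific person's identity."""
    def decode(code: Code) -> Face:
        expression, pose = code
        return [identity, expression, pose]
    return decode


# One decoder per person; the identity values 1.0 and -1.0 are arbitrary.
decode_a = make_decoder(1.0)
decode_b = make_decoder(-1.0)


def swap_a_to_b(frames: List[Face]) -> List[Face]:
    """Feed person A's encoded frames into person B's decoder, frame by frame."""
    return [decode_b(encode(frame)) for frame in frames]
```

Passing person A's frames through `decode_a(encode(frame))` simply reconstructs them, while `swap_a_to_b` returns frames that carry B's identity value with A's expression and pose, which is the swap the article describes.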

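
The generator-versus-discriminator loop that the article describes for a generative adversarial network can also be sketched in plain Python. Everything here is an assumption made for illustration: single numbers stand in for images, “real” samples are drawn around 4.0, the generator is a straight line `a * noise + b`, and the discriminator is a one-variable logistic model. The function name `train_toy_gan` and its parameters are hypothetical. The sketch shows the alternating feedback only and makes no claim about how well, or whether, such a tiny model converges.

```python
import math
import random


def sigmoid(t: float) -> float:
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 2000, lr: float = 0.01, seed: int = 0):
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: fake = a * noise + b
    w, c = 0.0, 0.0  # discriminator: P(real) = sigmoid(w * x + c)

    for _ in range(steps):
        real = rng.gauss(4.0, 0.5)   # stand-in for one real image
        noise = rng.gauss(0.0, 1.0)  # random noise fed to the generator
        fake = a * noise + b

        # Discriminator step: raise P(real) on the real sample,
        # lower it on the generator's fake.
        p_real = sigmoid(w * real + c)
        p_fake = sigmoid(w * fake + c)
        w += lr * ((1.0 - p_real) * real - p_fake * fake)
        c += lr * ((1.0 - p_real) - p_fake)

        # Generator step: adjust a and b so the updated discriminator
        # rates the same fake as more likely to be real.
        p_fake = sigmoid(w * fake + c)
        a += lr * (1.0 - p_fake) * w * noise
        b += lr * (1.0 - p_fake) * w

    def generator(noise: float) -> float:
        return a * noise + b

    def discriminator(x: float) -> float:
        return sigmoid(w * x + c)

    return generator, discriminator
```

Calling `generator, discriminator = train_toy_gan()` returns the two trained functions; `discriminator(generator(0.3))` then gives the discriminator's estimated probability that a generated sample is real.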